
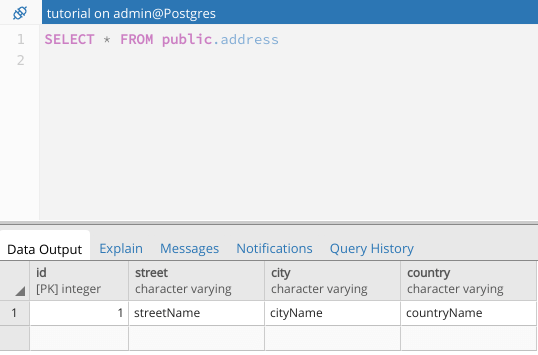

COPY … WHERE: Applying filters while importing data

In PostgreSQL, data can easily be filtered while it is being imported. The COPY command is pretty flexible and allows a lot of trickery. While having a link to the documentation around is certainly beneficial, the WHERE condition added to PostgreSQL 12 might be even more important.

What is the purpose of this new feature? So far, it was only possible to import a file completely. In some cases this has been a problem: more often than not, people only wanted to load a subset of the data and had to write a ton of code to filter it, either before the import or after the data had already been written into the database.

To show you how the new WHERE clause works, I have compiled a simple example:

db12=# … SELECT * FROM generate_series(1, 1000) AS id

First of all, 1000 rows are generated to make sure that we have some data to play with. Then we export the content of this table to a file. Finally, we can try to import this data again:

db12=# COPY t_import FROM '/tmp/file.txt' WHERE x …

In some cases, you might want to do more than just export data. In this case, I decided to compress the data while exporting. Before the data is imported again, it is uncompressed and filtered again:

db12=# COPY t_import FROM PROGRAM 'gunzip -c /tmp/'

db12=# SELECT * FROM t_import WHERE x >= 100

As you can see, it is pretty simple to combine those features in a flexible way.

If you want to learn more about COPY, check out the PostgreSQL documentation. If you want to learn more about PostgreSQL and loading data in general, check out our post about rules and triggers.
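The workflow described above can be sketched end to end as follows. This is a minimal sketch, not the exact commands from the post: the table name `t_demo`, the definition of `t_import`, the filter conditions, and the compressed file name `/tmp/file.txt.gz` are assumptions chosen for illustration. It also assumes PostgreSQL 12 or later and a role that is allowed to read and write server-side files and to run server-side programs (superuser, or a member of `pg_read_server_files`, `pg_write_server_files` and `pg_execute_server_program`).

```sql
-- Generate 1000 rows of sample data (t_demo is an assumed table name).
CREATE TABLE t_demo AS
    SELECT * FROM generate_series(1, 1000) AS id;

-- Export the table to a file on the database server.
COPY t_demo TO '/tmp/file.txt';

-- Target table for the import (assumed definition: a single integer column x).
CREATE TABLE t_import (x int);

-- Import only the rows that satisfy the condition (assumed filter).
-- Rows for which the condition is not true are skipped.
COPY t_import FROM '/tmp/file.txt' WHERE x < 5;

-- Variant with compression: pipe the export through gzip
-- (the file name /tmp/file.txt.gz is an assumption).
COPY t_demo TO PROGRAM 'gzip -c > /tmp/file.txt.gz';

-- Uncompress on the fly and filter again while importing (assumed filter).
COPY t_import FROM PROGRAM 'gunzip -c /tmp/file.txt.gz'
    WHERE x >= 100 AND x < 103;

-- Check what was loaded by the second import.
SELECT * FROM t_import WHERE x >= 100;
```

The filter is evaluated row by row during the load, so no staging table or post-import DELETE is needed. Note that the paths and programs refer to the database server, not to the machine running psql.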
